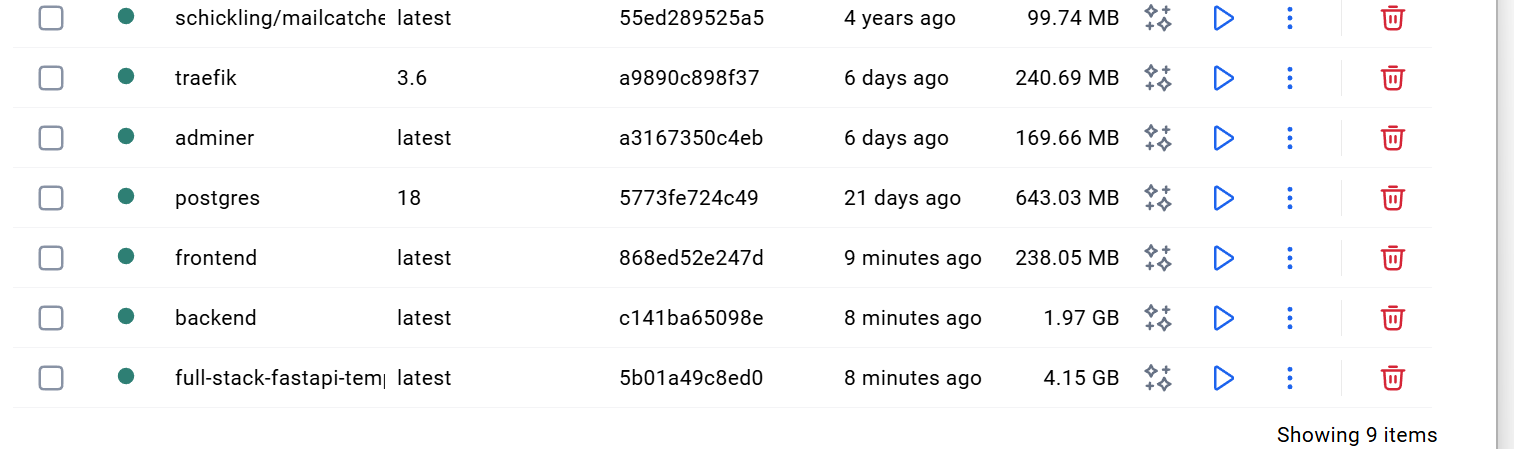

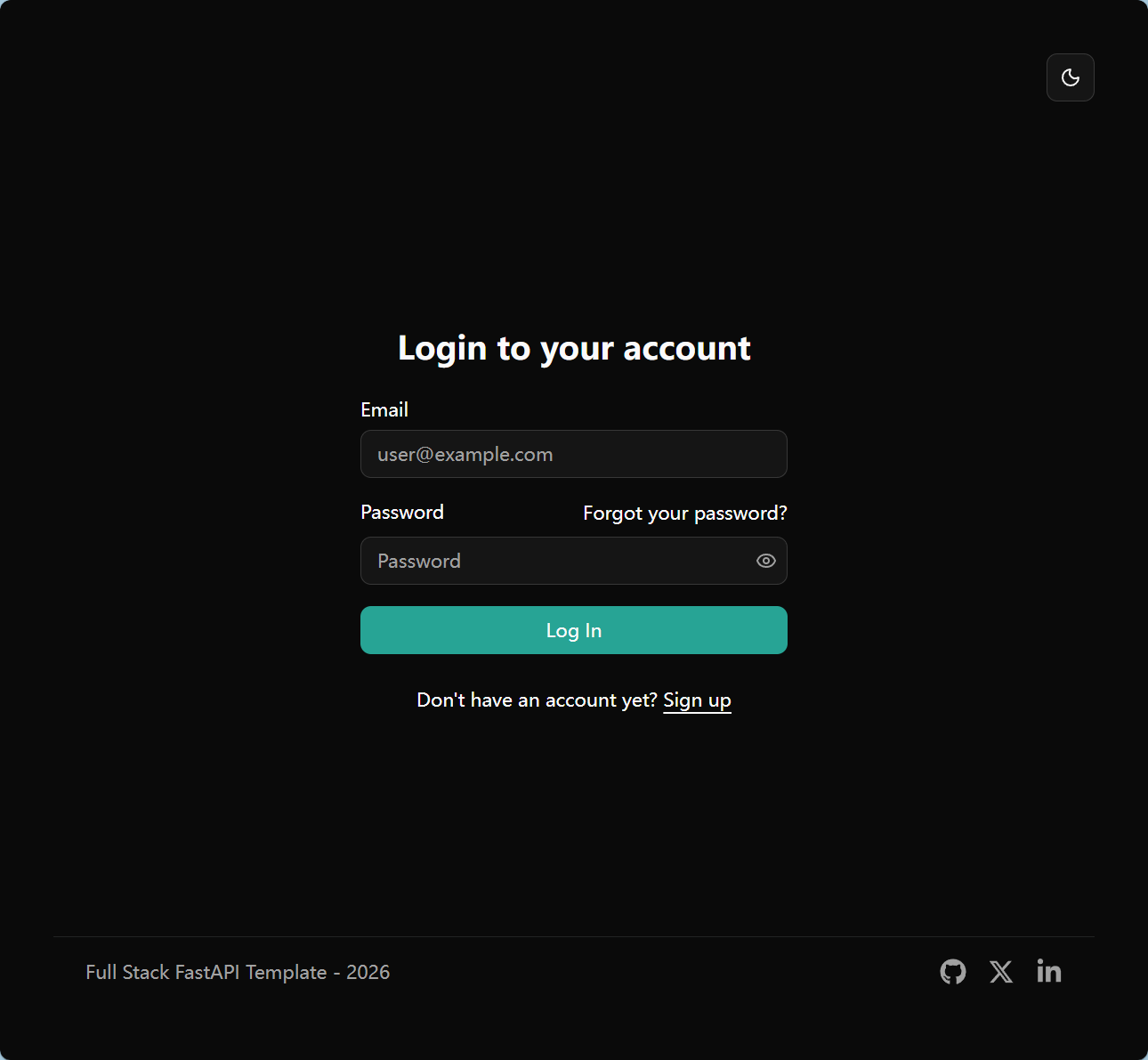

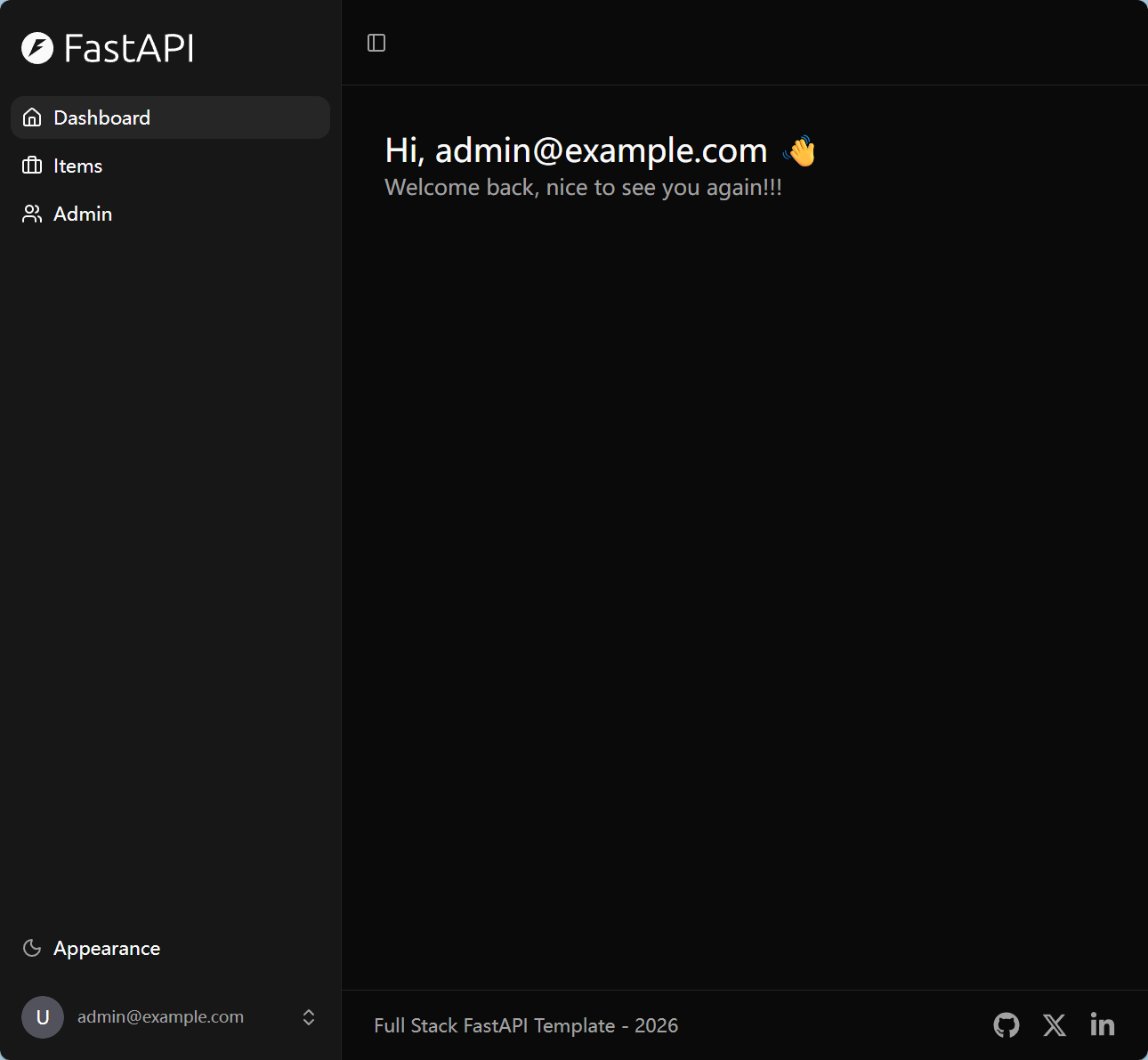

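If, like me, you have barely used Docker, don't understand how the front end and back end actually work together, and are just running the project by following the template's Markdown docs while asking an AI along the way, these screens will leave you completely lost.

When I asked an AI about it, it simply told me that there are this many ports.

Even though I had skimmed the FastAPI tutorial, picked up a little database knowledge, and thought I was ready to start building a project, these screens put me right back in my place. Real-world front-end/back-end engineering is clearly hard to understand, and not something an ordinary student picks up easily.

So I started reading the project docs and found that they explain almost none of the underlying principles; they only describe how to run things. I asked an AI about that and got the following answer.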

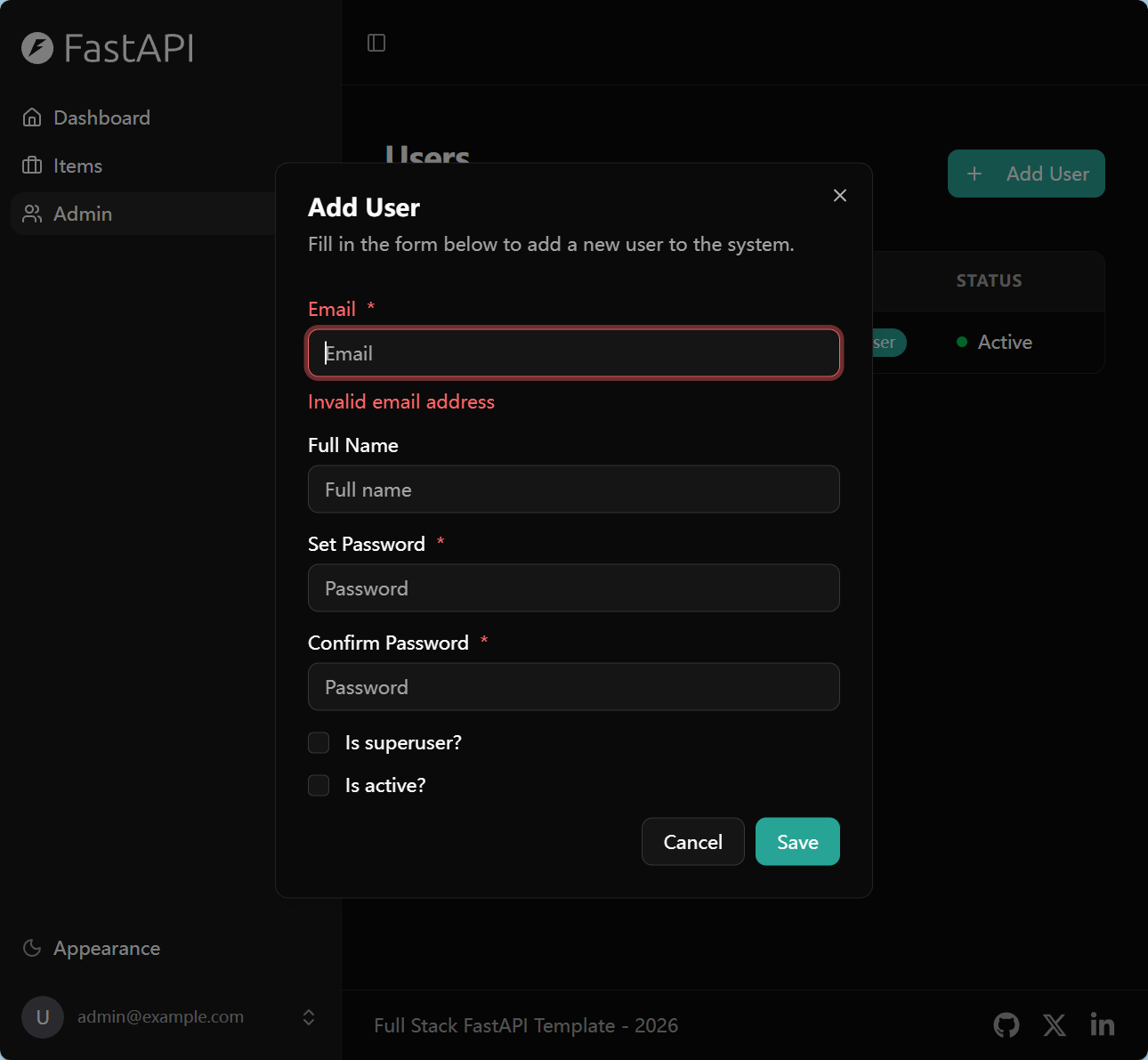

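This template (full-stack-fastapi-template) is designed around production-grade best practices and is not written for complete beginners. The official docs and README assume you already know the basics of FastAPI, Docker, and React. GitHub discussion #1115 says so explicitly: not beginner friendly; the goal is to give experienced developers a starting point.

For exactly that reason, if I can get on top of this project, then building any front end/back end afterwards should be no problem at all. What follows is my learning log.

Prerequisites: basic Python object-oriented syntax, and a careful read of the official FastAPI documentation.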

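Reference

Docker packages an application and its dependencies into an image file. A Docker container can only be created from such a file. The image file can be thought of as the container's template: Docker creates container instances from it, and one image file can produce multiple container instances running at the same time.

In other words, an image is like a zip archive that bundles the application with the components it needs, and a container is like extracting that archive and running the result. The analogy is loose: a container is a running, isolated instance of the image, and the image itself is left unchanged.

Docker builds images by reading the instructions in a Dockerfile. A Dockerfile is a text file containing the instructions for building your source code into an image.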

```dockerfile
FROM node:8.4
COPY . /app
WORKDIR /app
RUN npm install --registry=https://registry.npm.taobao.org
EXPOSE 3000
```

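`FROM node:8.4`: the image inherits from the official node image. The colon introduces the tag; here the tag is 8.4, i.e. version 8.4 of node.

Once you have a Dockerfile, you can create the image with the `docker image build` command, for example `docker image build -t koa-demo .` (the trailing dot is the build context, here the current directory).

`docker build` is shorthand for `docker image build`.

So a Dockerfile is essentially like a .bat file: every command needed to set up the environment and dependencies is written in one file and run in a single pass, and the image is what `docker build` produces from it.

Looking closely, the template's directory contains a Dockerfile.playwright file. Opening it shows nothing unusual compared with an ordinary Dockerfile. Playwright is a browser-based end-to-end testing tool, so this file builds the image used to run those tests, not the image that actually gets deployed.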

```dockerfile
FROM mcr.microsoft.com/playwright:v1.58.0-noble

WORKDIR /app

RUN apt-get update && apt-get install -y unzip \
    && rm -rf /var/lib/apt/lists/*

RUN curl -fsSL https://bun.sh/install | bash

ENV PATH="/root/.bun/bin:$PATH"

COPY package.json bun.lock /app/

COPY frontend/package.json /app/frontend/

WORKDIR /app/frontend

RUN bun install

COPY ./frontend /app/frontend

ARG VITE_API_URL
```

```bash
docker run [OPTIONS] IMAGE[:TAG|@DIGEST] [COMMAND] [ARG...]
```

In fact, `docker run` is shorthand for `docker container run`.

Example 1

```bash
$ docker container run -p 8000:3000 -it koa-demo /bin/bash
# or
$ docker container run -p 8000:3000 -it koa-demo:0.0.1 /bin/bash
```

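The parameters in the commands above mean the following:

`-p 8000:3000`: maps port 3000 in the container to port 8000 on the host.

`-it`: attaches an interactive terminal, so the container's shell is connected to your current shell.

`koa-demo:0.0.1`: the image name, optionally followed by a tag (the default tag is latest).

`/bin/bash`: the command to run inside the container once it starts.

If everything works, running the command above returns a command-line prompt: `root@66d80f4aaf1e:/app#`

`root`: the currently logged-in user. This is the Linux superuser; working as root carries a high risk.

`@`: a separator between the user name and the host name.

`66d80f4aaf1e`: the host name. In a Docker environment this is usually the first 12 characters of the container ID.

`:/app`: the current working directory, i.e. the app folder under the root directory.

`#`: the privilege marker. `#` means root (superuser); `$` means an ordinary user.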
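The prompt breakdown above can be checked mechanically. This is a minimal sketch, assuming the common `user@host:cwd` layout followed by `#` or `$`; the regular expression and the `parse_prompt` helper are illustrative names, not part of Docker or bash.

```python
import re

# Pattern for the common "user@host:cwd" + "#"/"$" bash prompt layout.
PROMPT_RE = re.compile(r"^(?P<user>[^@]+)@(?P<host>[^:]+):(?P<cwd>.*)(?P<mark>[#$])$")


def parse_prompt(prompt: str) -> dict[str, str]:
    """Split a prompt such as 'root@66d80f4aaf1e:/app#' into its parts."""
    match = PROMPT_RE.match(prompt.strip())
    if match is None:
        raise ValueError(f"unrecognized prompt: {prompt!r}")
    parts = match.groupdict()
    # "#" marks the superuser, "$" an ordinary user.
    parts["is_root"] = "yes" if parts["mark"] == "#" else "no"
    return parts


print(parse_prompt("root@66d80f4aaf1e:/app#"))
```

Real prompts are configurable through `PS1`, so this only fits the default-looking layout shown here.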

Example 2

```
PS C:\Users\...> docker run hello-world

Hello from Docker!
This message shows that your installation appears to be working correctly.

To generate this message, Docker took the following steps:
 1. The Docker client contacted the Docker daemon.
 2. The Docker daemon pulled the "hello-world" image from the Docker Hub.
    (amd64)
 3. The Docker daemon created a new container from that image which runs the
    executable that produces the output you are currently reading.
 4. The Docker daemon streamed that output to the Docker client, which sent it
    to your terminal.
```

This hello-world image shows what `docker run` does, step by step.

```yaml
services:

  db:
    image: postgres:18
    restart: always
    healthcheck:
      test: ["CMD-SHELL", "pg_isready -U ${POSTGRES_USER} -d ${POSTGRES_DB}"]
      interval: 10s
      retries: 5
      start_period: 30s
      timeout: 10s
    volumes:
      - app-db-data:/var/lib/postgresql/data/pgdata
    env_file:
      - .env
    environment:
      - PGDATA=/var/lib/postgresql/data/pgdata
      - POSTGRES_PASSWORD=${POSTGRES_PASSWORD?Variable not set}
      - POSTGRES_USER=${POSTGRES_USER?Variable not set}
      - POSTGRES_DB=${POSTGRES_DB?Variable not set}
```

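The file above is an excerpt from compose.yml, the file `docker compose` works from (by default it also merges in compose.override.yml when one is present). After you type `docker compose up` on the command line, Docker follows the YAML to pull images, set up the environment, resolve dependencies, and start all the services.

Example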

```yaml
mysql:
    image: mysql:5.7
    environment:
     - MYSQL_ROOT_PASSWORD=123456
     - MYSQL_DATABASE=wordpress
web:
    image: wordpress
    links:
     - mysql
    environment:
     - WORDPRESS_DB_PASSWORD=123456
    ports:
     - "127.0.0.3:8080:80"
    working_dir: /var/www/html
    volumes:
     - wordpress:/var/www/html
```

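`image`: the image the service's container is created from.

`environment`: environment variables, just like those of an ordinary operating system.

`working_dir`: in Linux, every process runs with an associated directory. If you don't specify one, it usually defaults to the root directory `/` (or to the WORKDIR set in the image). Setting `working_dir` is like running `cd` once inside the container before anything else happens.

By default, everything a container writes is temporary; `volumes` keeps the data you need to preserve by storing it on the host.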

A volume’s contents exist outside the lifecycle of a given container. When a container is destroyed, the writable layer is destroyed with it. Using a volume ensures that the data is persisted even if the container using it is removed.

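```yaml
services:
  frontend:
    image: node:lts
    volumes:
      - myapp:/home/node/app
volumes:
  myapp:
```

The top-level `volumes: myapp:` tells Docker Engine to set aside a Docker-managed storage area on the host's disk called myapp.

The service-level `volumes: - myapp:/home/node/app` mounts myapp at /home/node/app.

The first time you run `docker compose up`, Docker copies the files that already exist in that directory into myapp (this happens while the volume is still empty). After that, the container's reads and writes to that directory go directly to the myapp volume, and other directories are unaffected.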

The watch attribute automatically updates and previews your running Compose services as you edit and save your code. For many projects, this enables a hands-off development workflow once Compose is running, as services automatically update themselves when you save your work.

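In other words, this gives you hot reloading: after you change the code you do not have to rebuild and restart the containers by hand, which a plain `docker compose up` does not do for you.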

```yaml
services:

  db:
    image: postgres:18
    restart: always
    healthcheck:
      test: ["CMD-SHELL", "pg_isready -U ${POSTGRES_USER} -d ${POSTGRES_DB}"]
      interval: 10s
      retries: 5
      start_period: 30s
      timeout: 10s
    volumes:
      - app-db-data:/var/lib/postgresql/data/pgdata
    env_file:
      - .env
    environment:
      - PGDATA=/var/lib/postgresql/data/pgdata
      - POSTGRES_PASSWORD=${POSTGRES_PASSWORD?Variable not set}
      - POSTGRES_USER=${POSTGRES_USER?Variable not set}
      - POSTGRES_DB=${POSTGRES_DB?Variable not set}

  adminer:
    image: adminer
    restart: always
    networks:
      - traefik-public
      - default
    depends_on:
      - db
    environment:
      - ADMINER_DESIGN=pepa-linha-dark
    labels:
      - traefik.enable=true
      - traefik.docker.network=traefik-public
      - traefik.constraint-label=traefik-public
      - traefik.http.routers.${STACK_NAME?Variable not set}-adminer-http.rule=Host(`adminer.${DOMAIN?Variable not set}`)
      - traefik.http.routers.${STACK_NAME?Variable not set}-adminer-http.entrypoints=http
      - traefik.http.routers.${STACK_NAME?Variable not set}-adminer-http.middlewares=https-redirect
      - traefik.http.routers.${STACK_NAME?Variable not set}-adminer-https.rule=Host(`adminer.${DOMAIN?Variable not set}`)
      - traefik.http.routers.${STACK_NAME?Variable not set}-adminer-https.entrypoints=https
      - traefik.http.routers.${STACK_NAME?Variable not set}-adminer-https.tls=true
      - traefik.http.routers.${STACK_NAME?Variable not set}-adminer-https.tls.certresolver=le
      - traefik.http.services.${STACK_NAME?Variable not set}-adminer.loadbalancer.server.port=8080

  prestart:
    image: '${DOCKER_IMAGE_BACKEND?Variable not set}:${TAG-latest}'
    build:
      context: .
      dockerfile: backend/Dockerfile
    networks:
      - traefik-public
      - default
    depends_on:
      db:
        condition: service_healthy
        restart: true
    command: bash scripts/prestart.sh
    env_file:
      - .env
    environment:
      - DOMAIN=${DOMAIN}
      - FRONTEND_HOST=${FRONTEND_HOST?Variable not set}
      - ENVIRONMENT=${ENVIRONMENT}
      - BACKEND_CORS_ORIGINS=${BACKEND_CORS_ORIGINS}
      - SECRET_KEY=${SECRET_KEY?Variable not set}
      - FIRST_SUPERUSER=${FIRST_SUPERUSER?Variable not set}
      - FIRST_SUPERUSER_PASSWORD=${FIRST_SUPERUSER_PASSWORD?Variable not set}
      - SMTP_HOST=${SMTP_HOST}
      - SMTP_USER=${SMTP_USER}
      - SMTP_PASSWORD=${SMTP_PASSWORD}
      - EMAILS_FROM_EMAIL=${EMAILS_FROM_EMAIL}
      - POSTGRES_SERVER=db
      - POSTGRES_PORT=${POSTGRES_PORT}
      - POSTGRES_DB=${POSTGRES_DB}
      - POSTGRES_USER=${POSTGRES_USER?Variable not set}
      - POSTGRES_PASSWORD=${POSTGRES_PASSWORD?Variable not set}
      - SENTRY_DSN=${SENTRY_DSN}

  backend:
    image: '${DOCKER_IMAGE_BACKEND?Variable not set}:${TAG-latest}'
    restart: always
    networks:
      - traefik-public
      - default
    depends_on:
      db:
        condition: service_healthy
        restart: true
      prestart:
        condition: service_completed_successfully
    env_file:
      - .env
    environment:
      - DOMAIN=${DOMAIN}
      - FRONTEND_HOST=${FRONTEND_HOST?Variable not set}
      - ENVIRONMENT=${ENVIRONMENT}
      - BACKEND_CORS_ORIGINS=${BACKEND_CORS_ORIGINS}
      - SECRET_KEY=${SECRET_KEY?Variable not set}
      - FIRST_SUPERUSER=${FIRST_SUPERUSER?Variable not set}
      - FIRST_SUPERUSER_PASSWORD=${FIRST_SUPERUSER_PASSWORD?Variable not set}
      - SMTP_HOST=${SMTP_HOST}
      - SMTP_USER=${SMTP_USER}
      - SMTP_PASSWORD=${SMTP_PASSWORD}
      - EMAILS_FROM_EMAIL=${EMAILS_FROM_EMAIL}
      - POSTGRES_SERVER=db
      - POSTGRES_PORT=${POSTGRES_PORT}
      - POSTGRES_DB=${POSTGRES_DB}
      - POSTGRES_USER=${POSTGRES_USER?Variable not set}
      - POSTGRES_PASSWORD=${POSTGRES_PASSWORD?Variable not set}
      - SENTRY_DSN=${SENTRY_DSN}

    healthcheck:
      test: ["CMD", "curl", "-f", "http://localhost:8000/api/v1/utils/health-check/"]
      interval: 10s
      timeout: 5s
      retries: 5

    build:
      context: .
      dockerfile: backend/Dockerfile
    labels:
      - traefik.enable=true
      - traefik.docker.network=traefik-public
      - traefik.constraint-label=traefik-public

      - traefik.http.services.${STACK_NAME?Variable not set}-backend.loadbalancer.server.port=8000

      - traefik.http.routers.${STACK_NAME?Variable not set}-backend-http.rule=Host(`api.${DOMAIN?Variable not set}`)
      - traefik.http.routers.${STACK_NAME?Variable not set}-backend-http.entrypoints=http

      - traefik.http.routers.${STACK_NAME?Variable not set}-backend-https.rule=Host(`api.${DOMAIN?Variable not set}`)
      - traefik.http.routers.${STACK_NAME?Variable not set}-backend-https.entrypoints=https
      - traefik.http.routers.${STACK_NAME?Variable not set}-backend-https.tls=true
      - traefik.http.routers.${STACK_NAME?Variable not set}-backend-https.tls.certresolver=le

      - traefik.http.routers.${STACK_NAME?Variable not set}-backend-http.middlewares=https-redirect

  frontend:
    image: '${DOCKER_IMAGE_FRONTEND?Variable not set}:${TAG-latest}'
    restart: always
    networks:
      - traefik-public
      - default
    build:
      context: .
      dockerfile: frontend/Dockerfile
      args:
        - VITE_API_URL=https://api.${DOMAIN?Variable not set}
        - NODE_ENV=production
    labels:
      - traefik.enable=true
      - traefik.docker.network=traefik-public
      - traefik.constraint-label=traefik-public

      - traefik.http.services.${STACK_NAME?Variable not set}-frontend.loadbalancer.server.port=80

      - traefik.http.routers.${STACK_NAME?Variable not set}-frontend-http.rule=Host(`dashboard.${DOMAIN?Variable not set}`)
      - traefik.http.routers.${STACK_NAME?Variable not set}-frontend-http.entrypoints=http

      - traefik.http.routers.${STACK_NAME?Variable not set}-frontend-https.rule=Host(`dashboard.${DOMAIN?Variable not set}`)
      - traefik.http.routers.${STACK_NAME?Variable not set}-frontend-https.entrypoints=https
      - traefik.http.routers.${STACK_NAME?Variable not set}-frontend-https.tls=true
      - traefik.http.routers.${STACK_NAME?Variable not set}-frontend-https.tls.certresolver=le

      - traefik.http.routers.${STACK_NAME?Variable not set}-frontend-http.middlewares=https-redirect

volumes:
  app-db-data:

networks:
  traefik-public:
    external: true
```

This is the compose.yml from the template project. However you look at it, it is complicated.
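Much of the visual noise in that file comes from variable interpolation: `${NAME?message}` aborts with the message when NAME is unset, and `${NAME-default}` falls back to a default. The following is a rough Python sketch of just those two forms plus plain `${NAME}`; `interpolate` is a hypothetical helper written for illustration, not Compose's own implementation, and it ignores the `:?` and `:-` variants.

```python
import re

# Matches ${NAME}, ${NAME?message} and ${NAME-default}.
_VAR_RE = re.compile(
    r"\$\{(?P<name>[A-Za-z_][A-Za-z0-9_]*)(?:(?P<op>[?-])(?P<arg>[^}]*))?\}"
)


def interpolate(text: str, env: dict[str, str]) -> str:
    """Substitute variables in text using the values in env."""

    def resolve(match: re.Match) -> str:
        name, op, arg = match["name"], match["op"], match["arg"]
        if name in env:
            return env[name]
        if op == "?":
            raise KeyError(f"{name}: {arg}")  # required variable is missing
        if op == "-":
            return arg  # fall back to the default after the dash
        return ""  # plain ${NAME}: an unset variable becomes an empty string

    return _VAR_RE.sub(resolve, text)


line = "${DOCKER_IMAGE_BACKEND?Variable not set}:${TAG-latest}"
print(interpolate(line, {"DOCKER_IMAGE_BACKEND": "backend"}))
```

With only `DOCKER_IMAGE_BACKEND` defined, the image name resolves to `backend:latest`, which is why the template runs locally without a `TAG` in .env.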

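`healthcheck`: checks whether the container is working properly.

`test`: the tool and method used for the check.

`pg_isready`: a PostgreSQL-specific probe that checks whether the database is accepting connections.

`curl`: simulates a user calling the backend API.

`interval`: run the check every 10 s.

`retries`: after five consecutive failures the container is marked unhealthy.

`start_period`: a 30 s grace period after the container starts; failed checks during this window do not count toward `retries`.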
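To see how `interval`, `retries`, and `start_period` interact, here is a simplified model in Python. It is only a sketch under stated assumptions: probe number k is assumed to run at k × interval seconds, `timeout` is ignored, and `health_status` is a made-up name rather than anything Docker exposes.

```python
def health_status(
    probes: list[bool],
    interval: int = 10,
    retries: int = 5,
    start_period: int = 30,
) -> str:
    """Return 'starting', 'healthy' or 'unhealthy' after the given probe results."""
    status = "starting"
    failures = 0
    for index, ok in enumerate(probes):
        elapsed = (index + 1) * interval  # assumed time of this probe
        if ok:
            status, failures = "healthy", 0  # any success resets the counter
        elif elapsed <= start_period and status == "starting":
            continue  # failures inside the grace period are not counted
        else:
            failures += 1
            if failures >= retries:
                status = "unhealthy"
    return status


print(health_status([False, False, True]))  # database came up on the third probe
print(health_status([False] * 8))           # never came up
```

In this model the first three failures fall inside the 30 s grace period, so it takes eight failed probes in a row (three ignored plus five counted) before the status flips to unhealthy.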

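`restart`: the policy for restarting the container when it stops.

`no`: the default; the container is not restarted.

`always`: Docker tries to restart the container whenever it stops.

`prestart`: uses the same image as backend, does the preparation that must finish before backend starts, and then exits.

`build`: effectively `docker build`; instead of pulling an image, it builds one locally. When it appears together with the `image` key, Compose first checks whether that image already exists and builds a new one only if it does not; the built image is given the name from `image`.

`context`: the build context, i.e. the directory whose contents are built into the image.

A note on why: local files are built into an image, loaded into a container, and then run from inside that container so that ports and environment settings can be managed uniformly through Docker.

`depends_on`: this container starts only after the containers listed under `depends_on` are up, which keeps the logical order intact. With `condition: service_healthy` it waits until the dependency passes its health check, and with `condition: service_completed_successfully` until the dependency has exited successfully.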
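The ordering that `depends_on` implies is a topological sort of the service graph. As a small illustration (not how Compose is implemented), the standard-library `graphlib` module can compute one valid startup order for the services in the template's compose.yml; the dictionary below is transcribed by hand from the `depends_on` entries.

```python
from graphlib import TopologicalSorter

# service -> services it depends on, copied from depends_on in compose.yml
# (db and frontend declare no dependencies).
depends_on = {
    "db": set(),
    "adminer": {"db"},
    "prestart": {"db"},
    "backend": {"db", "prestart"},
    "frontend": set(),
}

# static_order() yields every service after all of its dependencies.
order = list(TopologicalSorter(depends_on).static_order())
print(order)
```

Several orders are valid, so the exact list may differ between runs, but db always comes before prestart, and prestart before backend.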

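`labels`: metadata aimed at external management tools such as Traefik, supplying the configuration they read.

`networks`: handles communication between containers.

`ports`: the ports mapped to the host.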

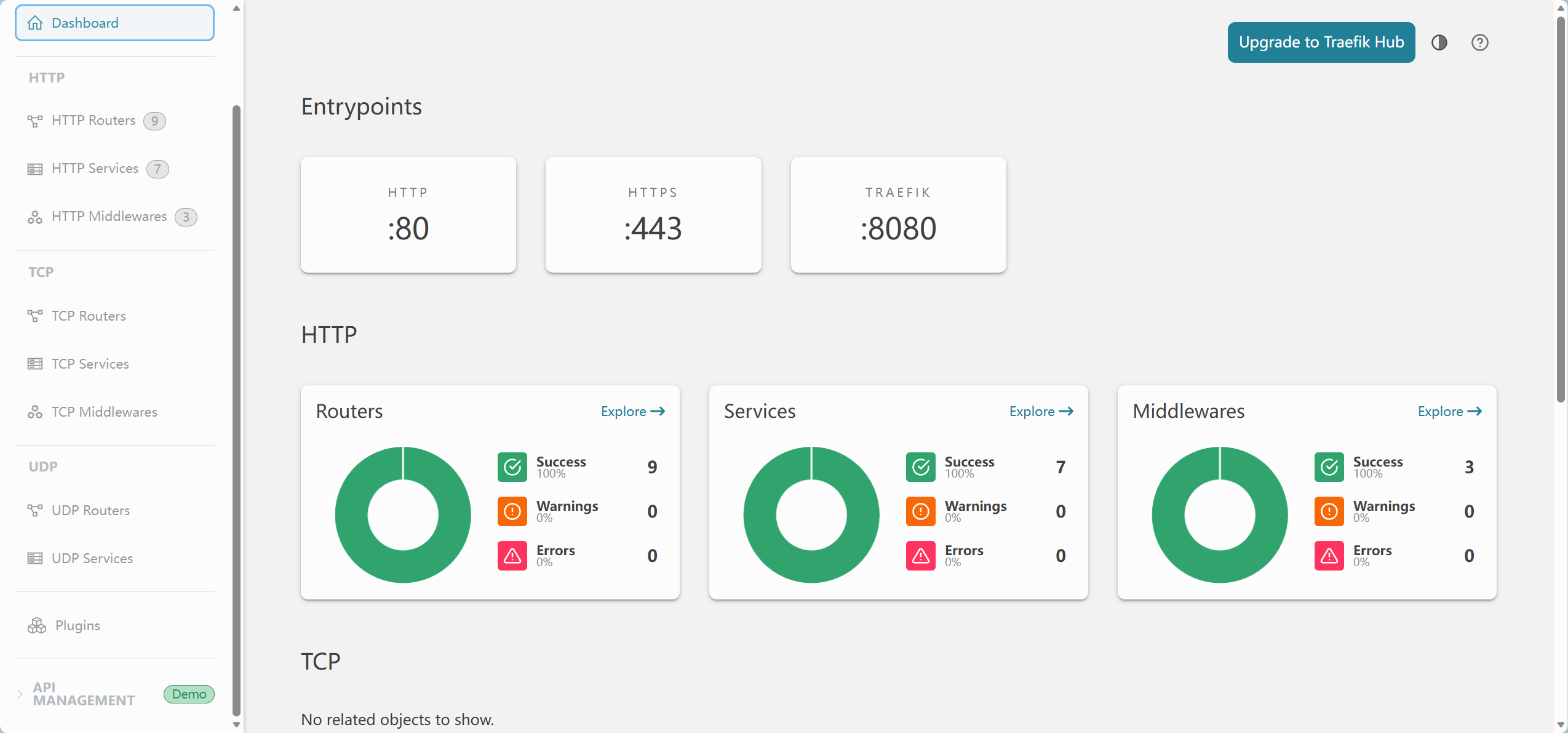

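Traefik: a reverse proxy and router.

Shell script is a powerful command-line language that can be used in Linux shells such as the Bourne Again Shell (/bin/bash) and the Bourne Shell (/usr/bin/sh or /bin/sh).

Since a Docker container is really just a Linux process, you can also prepare a .sh file and reference it in compose.yml, which is like running a .bat script: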

command: bash scripts/prestart.sh

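```bash
#! /usr/bin/env bash

set -e
set -x

# Wait until the database accepts connections
python app/backend_pre_start.py

# Run the database migrations
alembic upgrade head

# Create the initial data in the database
python app/initial_data.py
```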

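`#!` tells the system that the program at the path that follows is the shell that interprets this script.

In everyday use people often do not distinguish between the Bourne Shell and the Bourne Again Shell, so `#!/bin/sh` can frequently be replaced with `#!/bin/bash`. The two are not always the same program, though: on Debian and Ubuntu, for example, /bin/sh is dash, so scripts that rely on bash-only features need the bash shebang.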
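The two `set` lines in prestart.sh matter as much as the shebang: `set -e` stops the script at the first command that fails, and `set -x` echoes each command before running it. A rough Python equivalent, as a sketch only, might look like this; `run_steps` is a hypothetical helper, and harmless placeholder commands stand in for the three real steps.

```python
import subprocess
import sys


def run_steps(steps: list[list[str]]) -> int:
    """Run commands in order and stop at the first failure, like `set -e`."""
    completed = 0
    for argv in steps:
        print("+", " ".join(argv))        # like `set -x`: echo the command first
        subprocess.run(argv, check=True)  # raises CalledProcessError on non-zero exit
        completed += 1
    return completed


# Placeholders for: pre-start check, migrations, initial data.
ok = [sys.executable, "-c", "pass"]
print(run_steps([ok, ok, ok]))
```

This is why a failed migration keeps the prestart container from finishing successfully: the later steps never run and the script exits with an error.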

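Because of coursework I won't go deeper for now; if I need sh again I will write a separate blog post.

Adminer's official site sums it up with this slogan: Database management in a single PHP file

Let's first look at the .env file.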

```ini
DOMAIN=localhost
FRONTEND_HOST=http://localhost:5173
ENVIRONMENT=local

PROJECT_NAME="Full Stack FastAPI Project"
STACK_NAME=full-stack-fastapi-project

BACKEND_CORS_ORIGINS="http://localhost,http://localhost:5173,https://localhost,https://localhost:5173,http://localhost.tiangolo.com"
SECRET_KEY=changethis
FIRST_SUPERUSER=admin@example.com
FIRST_SUPERUSER_PASSWORD=changethis

SMTP_HOST=
SMTP_USER=
SMTP_PASSWORD=
EMAILS_FROM_EMAIL=info@example.com
SMTP_TLS=True
SMTP_SSL=False
SMTP_PORT=587

POSTGRES_SERVER=localhost
POSTGRES_PORT=5432
POSTGRES_DB=app
POSTGRES_USER=postgres
POSTGRES_PASSWORD=changethis

SENTRY_DSN=

DOCKER_IMAGE_BACKEND=backend
DOCKER_IMAGE_FRONTEND=frontend
```

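The application and the database have separate administrator accounts (FIRST_SUPERUSER versus POSTGRES_USER). This is safer, because it keeps the different processes isolated from each other.

The email settings are used by the backend to send mail, for example verification and password-recovery emails.

`SMTP_HOST`: the host name of the mail server.

`SMTP_USER` / `SMTP_PASSWORD`: the account credentials on that mail server.

`SMTP_PORT` (587): the port used for the connection.

`SMTP_TLS=True`: enables secure transport. The connection is upgraded to an encrypted channel (STARTTLS) before anything is sent, so your credentials and message contents cannot be intercepted in transit.

`SMTP_SSL=False`: the other, older style of encryption, where the connection is encrypted from the very first byte (typically on port 465).

`EMAILS_FROM_EMAIL`: the sender the recipient sees on the email.

Practical constraint: this address usually has to match the account you log in with as SMTP_USER; otherwise the mail server may treat the message as spoofed and refuse to send it.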
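The difference between the two flags is easier to see in code. The sketch below uses Python's standard `smtplib`; how the template itself turns `SMTP_TLS` and `SMTP_SSL` into a connection is not shown in this post, so `smtp_mode` and `open_smtp` are illustrative helpers, not the project's code.

```python
import smtplib


def smtp_mode(tls: bool, ssl: bool) -> str:
    """Map the two flags to a connection style."""
    if ssl:
        return "implicit-tls"  # encrypted from the first byte, commonly port 465
    if tls:
        return "starttls"      # plain connect, then upgrade, commonly port 587
    return "plain"


def open_smtp(host: str, port: int, tls: bool, ssl: bool) -> smtplib.SMTP:
    """Open a connection in the selected mode (needs a reachable mail server)."""
    mode = smtp_mode(tls, ssl)
    if mode == "implicit-tls":
        return smtplib.SMTP_SSL(host, port)
    client = smtplib.SMTP(host, port)
    if mode == "starttls":
        client.starttls()  # upgrade the existing connection to TLS
    return client


print(smtp_mode(tls=True, ssl=False))
```

With the values from .env (`SMTP_TLS=True`, `SMTP_SSL=False`, port 587) this selects the STARTTLS path.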

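After entering the corresponding account details and logging in to Adminer, you can see it is just an ordinary database management UI.

I made a few arbitrary changes.

Then I shut down the containers and ran `docker compose watch` again, only to find that prestart failed: I had accidentally changed the version number stored in the database. This also indirectly shows that changes to the database are persisted on local disk.

```
prestart-1  | INFO  [alembic.runtime.migration] Will assume transactional DDL.
prestart-1  | ERROR [alembic.util.messaging] Can't locate revision identified by '1eddcfe73d4b96aed0830bb587ece8d9'
prestart-1  | FAILED: Can't locate revision identified by '1eddcfe73d4b96aed0830bb587ece8d9'
```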

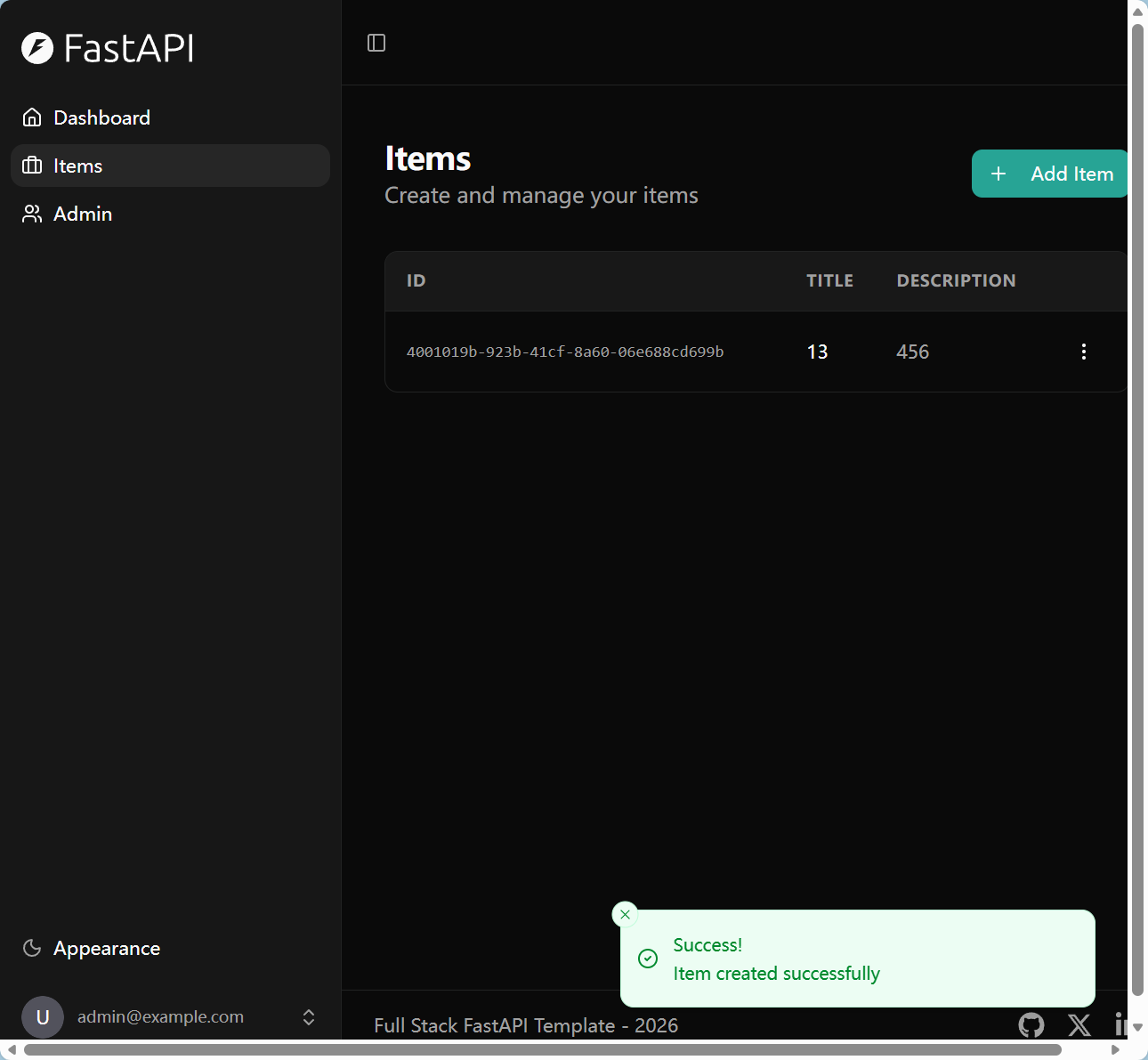

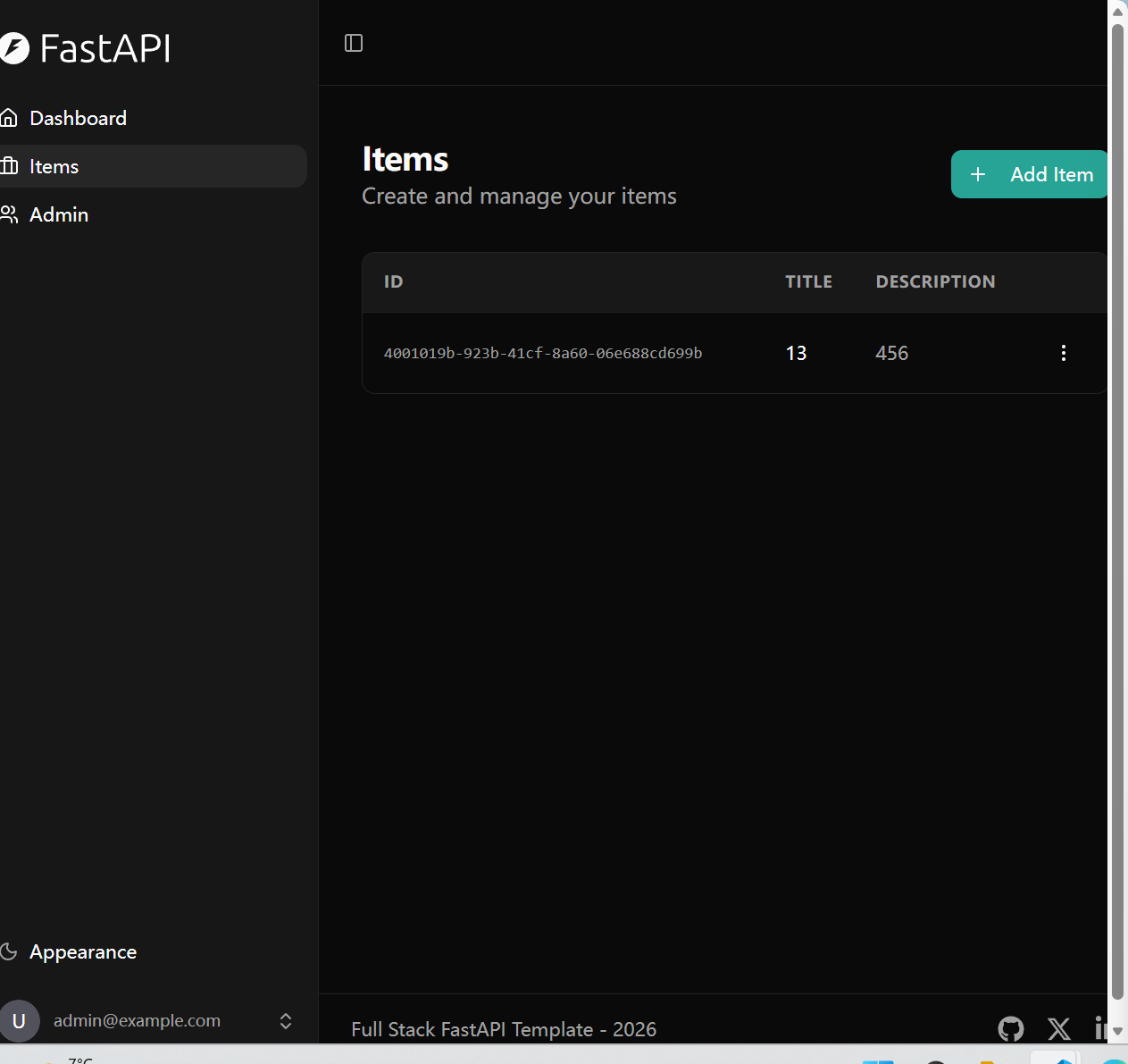

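I logged back in to Adminer, fixed the table, and the next run succeeded. Checking the backend page, all of my changes were still there.

Reading compose.yml again now, it feels much more familiar. `volumes: - app-db-data:/var/lib/postgresql/data/pgdata` ensures that only the database's changes are persisted locally; the containers under `services` communicate over the networks listed under `networks` (`traefik-public` and `default`); and `environment:` spells out the many required environment variables, whose values are read from the .env file.

Seen this way, Docker really is convenient: it ties all these processes together and keeps them talking to each other without errors.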

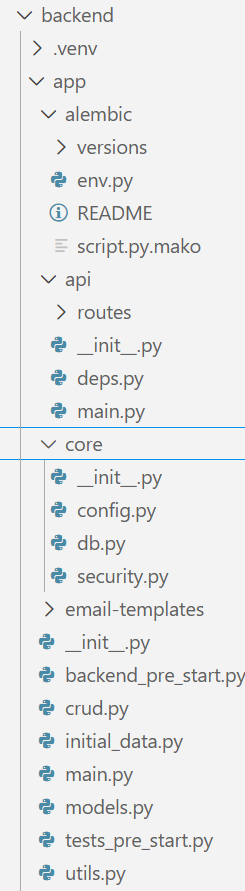

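Opening it up, there aren't actually a frightening number of files. The ones that really matter are api, core, and the .py files directly under app. Below I go through them file by file.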

```python
import logging

from sqlalchemy import Engine
from sqlmodel import Session, select
from tenacity import after_log, before_log, retry, stop_after_attempt, wait_fixed

from app.core.db import engine

logging.basicConfig(level=logging.INFO)
logger = logging.getLogger(__name__)

max_tries = 60 * 5  # 300 attempts, i.e. about 5 minutes at 1 s apart
wait_seconds = 1


@retry(
    stop=stop_after_attempt(max_tries),
    wait=wait_fixed(wait_seconds),
    before=before_log(logger, logging.INFO),
    after=after_log(logger, logging.WARN),
)
def init(db_engine: Engine) -> None:
    try:
        with Session(db_engine) as session:
            # Run a trivial query to check that the database is reachable
            session.exec(select(1))
    except Exception as e:
        logger.error(e)
        raise e


def main() -> None:
    logger.info("Initializing service")
    init(engine)
    logger.info("Service finished initializing")


if __name__ == "__main__":
    main()
```

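First there is the logging module. A quick skim of the Runoob tutorial shows it is responsible for logging and debugging output.

```python
import logging

logging.debug("This is a debug message")
logging.info("This is an info message")
logging.warning("This is a warning message")
logging.error("This is an error message")
logging.critical("This is a critical message")
```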

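That level of understanding is enough.

Next is a retry decorator. I looked at a retry tutorial, which had far too little in it, then switched to the tenacity tutorial, which is much more detailed.

A quick look at the code is enough to see what happens, though: until the limit is reached, the decorated init function is re-run once per second, and init is what checks the database. In other words, it keeps trying to connect to the database for up to 5 minutes (300 attempts, 1 s apart), logging each failure, so that later steps can rely on the database being reachable.

It's worth noting that the engine imported from core.db is used directly as the engine for connecting to the database, which indirectly shows that the SQLModel code in the different files shares a single engine.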
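To make the retry behaviour concrete without installing tenacity, here is a hand-rolled stand-in. It is a simplified sketch: `retry_fixed` is a hypothetical decorator that imitates `stop_after_attempt` plus `wait_fixed`, and it re-raises the last exception, whereas tenacity has its own error-handling options.

```python
import functools
import time


def retry_fixed(max_tries: int, wait_seconds: float):
    """Retry the wrapped function up to max_tries times, pausing between tries."""

    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            for attempt in range(1, max_tries + 1):
                try:
                    return func(*args, **kwargs)
                except Exception:
                    if attempt == max_tries:
                        raise  # out of attempts: surface the error
                    time.sleep(wait_seconds)

        return wrapper

    return decorator


calls = {"count": 0}


@retry_fixed(max_tries=5, wait_seconds=0)
def flaky_connect() -> str:
    """Pretend database check that only succeeds on the third call."""
    calls["count"] += 1
    if calls["count"] < 3:
        raise ConnectionError("database not ready yet")
    return "connected"


print(flaky_connect(), calls["count"])
```

The function fails twice, is retried, and succeeds on the third call, which is the same shape as init waiting for Postgres to finish starting.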

```python
import uuid
from typing import Any

from sqlmodel import Session, select

from app.core.security import get_password_hash, verify_password
from app.models import Item, ItemCreate, User, UserCreate, UserUpdate


def create_user(*, session: Session, user_create: UserCreate) -> User:
    db_obj = User.model_validate(
        user_create, update={"hashed_password": get_password_hash(user_create.password)}
    )
    session.add(db_obj)
    session.commit()
    session.refresh(db_obj)
    return db_obj


def update_user(*, session: Session, db_user: User, user_in: UserUpdate) -> Any:
    user_data = user_in.model_dump(exclude_unset=True)
    extra_data = {}
    if "password" in user_data:
        password = user_data["password"]
        hashed_password = get_password_hash(password)
        extra_data["hashed_password"] = hashed_password
    db_user.sqlmodel_update(user_data, update=extra_data)
    session.add(db_user)
    session.commit()
    session.refresh(db_user)
    return db_user


def get_user_by_email(*, session: Session, email: str) -> User | None:
    statement = select(User).where(User.email == email)
    session_user = session.exec(statement).first()
    return session_user


# Hash that is verified against when no user matches the email
DUMMY_HASH = "$argon2id$v=19$m=65536,t=3,p=4$MjQyZWE1MzBjYjJlZTI0Yw$YTU4NGM5ZTZmYjE2NzZlZjY0ZWY3ZGRkY2U2OWFjNjk"


def authenticate(*, session: Session, email: str, password: str) -> User | None:
    db_user = get_user_by_email(session=session, email=email)
    if not db_user:
        # Still run a verification so the call takes a similar amount of time
        verify_password(password, DUMMY_HASH)
        return None
    verified, updated_password_hash = verify_password(password, db_user.hashed_password)
    if not verified:
        return None
    if updated_password_hash:
        db_user.hashed_password = updated_password_hash
        session.add(db_user)
        session.commit()
        session.refresh(db_user)
    return db_user


def create_item(*, session: Session, item_in: ItemCreate, owner_id: uuid.UUID) -> Item:
    db_item = Item.model_validate(item_in, update={"owner_id": owner_id})
    session.add(db_item)
    session.commit()
    session.refresh(db_item)
    return db_item
```

wiki:In computer programming, create, read, update, and delete (CRUD) are the four basic operations (actions) of persistent storage.[1] CRUD is also sometimes used to describe user interface conventions that facilitate viewing, searching, and changing information using computer-based forms and reports.

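I keep wondering how far gone someone has to be to wrap a few operations this simple into an acronym nobody can read.

As the file name says, this file is for operating on the database. Start with the imported uuid module:

```python
import uuid

a = uuid.uuid4()
print(a)
```

Put simply, uuid4 produces a 128-bit identifier made almost entirely of random bits (a few bits are fixed to record the version and variant). The space of possible values is so large that a collision is very unlikely.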
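A few properties of these identifiers can be checked directly with the standard library. This is only a quick illustration; the sample size of 10,000 is arbitrary.

```python
import uuid

u = uuid.uuid4()
print(u)                           # different on every run
print(u.version)                   # the version field marks it as random-based
print(u.int.bit_length() <= 128)   # the whole value fits in 128 bits

# Generate a batch and count the distinct values.
batch = {uuid.uuid4() for _ in range(10_000)}
print(len(batch))
```

This is why the models can use `default_factory=uuid.uuid4` for primary keys without asking the database for the next free number.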

dummy hash
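In authenticate, `DUMMY_HASH` is verified against when no user matches the email, so that a failed login costs roughly the same time whether or not the account exists. The sketch below shows the idea with standard-library PBKDF2 instead of the template's Argon2 hash; the in-memory `USERS` table and the helper names are assumptions made for the example.

```python
import hashlib
import hmac
import os


def hash_password(password: str, salt: bytes) -> bytes:
    # PBKDF2 is a stand-in here; the template's DUMMY_HASH is an Argon2 hash.
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, 50_000)


DUMMY_SALT = os.urandom(16)
DUMMY_HASH = hash_password("not-a-real-password", DUMMY_SALT)

# Hypothetical in-memory "user table": email -> (salt, hash)
_salt = os.urandom(16)
USERS = {"admin@example.com": (_salt, hash_password("changethis", _salt))}


def authenticate(email: str, password: str) -> bool:
    record = USERS.get(email)
    if record is None:
        # Unknown email: still do the expensive hash so timing looks the same.
        hmac.compare_digest(hash_password(password, DUMMY_SALT), DUMMY_HASH)
        return False
    salt, stored = record
    return hmac.compare_digest(hash_password(password, salt), stored)


print(authenticate("admin@example.com", "changethis"))
print(authenticate("nobody@example.com", "changethis"))
```

Without the dummy verification, an attacker could tell which emails are registered just by measuring how quickly the login endpoint answers.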

Reading through the file makes it fairly clear that most of the code doesn't need FastAPI directly: there is no async def and no decorator such as @app.post(). Those only appear in the routes module, and this strict separation of concerns is very elegant.

initial_data.py can be skipped; all it does is call init_db from core.db.

```python
import sentry_sdk
from fastapi import FastAPI
from fastapi.routing import APIRoute
from starlette.middleware.cors import CORSMiddleware

from app.api.main import api_router
from app.core.config import settings


def custom_generate_unique_id(route: APIRoute) -> str:
    return f"{route.tags[0]}-{route.name}"


if settings.SENTRY_DSN and settings.ENVIRONMENT != "local":
    sentry_sdk.init(dsn=str(settings.SENTRY_DSN), enable_tracing=True)

app = FastAPI(
    title=settings.PROJECT_NAME,
    openapi_url=f"{settings.API_V1_STR}/openapi.json",
    generate_unique_id_function=custom_generate_unique_id,
)

# Set all CORS enabled origins
if settings.all_cors_origins:
    app.add_middleware(
        CORSMiddleware,
        allow_origins=settings.all_cors_origins,
        allow_credentials=True,
        allow_methods=["*"],
        allow_headers=["*"],
    )

app.include_router(api_router, prefix=settings.API_V1_STR)
```

import sentry_sdk

Simply put, Sentry is a remote error-collection system: it sends the server's exceptions to the Sentry console so they can be analyzed remotely.

```python
if settings.all_cors_origins:
    app.add_middleware(
        CORSMiddleware,
        allow_origins=settings.all_cors_origins,
        allow_credentials=True,
        allow_methods=["*"],
        allow_headers=["*"],
    )
```

This part is hard to follow. First, the source code in the library:

```python
from starlette.middleware import Middleware, _MiddlewareFactory


def add_middleware(
    self,
    middleware_class: _MiddlewareFactory[P],
    *args: P.args,
    **kwargs: P.kwargs,
) -> None:
    if self.middleware_stack is not None:
        raise RuntimeError("Cannot add middleware after an application has started")
    self.user_middleware.insert(0, Middleware(middleware_class, *args, **kwargs))
```

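Obviously I still can't follow it, so let's look at the official Starlette documentation first.

Its introduction describes Starlette as a lightweight ASGI framework/toolkit.

Then let's look at the ASGI documentation 😅

Then let's look at the WSGI documentation 😅

Fine, I'll write a separate blog post later analyzing ASGI and WSGI in detail 🙂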
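In the meantime, the core idea fits in a few lines: an ASGI application is an async callable taking `scope`, `receive`, and `send`, and a middleware is another such callable that wraps it. The sketch below is hand-written for illustration; `AddHeaderMiddleware` only appends one header, whereas the real CORSMiddleware also checks the request's origin and answers preflight requests.

```python
import asyncio


async def app(scope, receive, send):
    """A bare ASGI application: answers every HTTP request with 'hello'."""
    await send({"type": "http.response.start", "status": 200, "headers": []})
    await send({"type": "http.response.body", "body": b"hello"})


class AddHeaderMiddleware:
    """Wraps another ASGI app and appends one header to its response."""

    def __init__(self, inner, name: bytes, value: bytes):
        self.inner, self.name, self.value = inner, name, value

    async def __call__(self, scope, receive, send):
        async def send_with_header(message):
            if message["type"] == "http.response.start":
                headers = [*message["headers"], (self.name, self.value)]
                message = {**message, "headers": headers}
            await send(message)

        await self.inner(scope, receive, send_with_header)


async def call(asgi_app):
    """Drive the app by hand, playing the role an ASGI server would."""
    sent = []

    async def receive():
        return {"type": "http.request", "body": b"", "more_body": False}

    async def send(message):
        sent.append(message)

    await asgi_app({"type": "http", "method": "GET", "path": "/"}, receive, send)
    return sent


wrapped = AddHeaderMiddleware(app, b"access-control-allow-origin", b"http://localhost:5173")
messages = asyncio.run(call(wrapped))
print(messages[0]["headers"])
```

Seen this way, `add_middleware` in the Starlette source is just recording which wrapper classes to stack around the application before it starts.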

```python
import uuid
from datetime import datetime, timezone

from pydantic import EmailStr
from sqlalchemy import DateTime
from sqlmodel import Field, Relationship, SQLModel


def get_datetime_utc() -> datetime:
    return datetime.now(timezone.utc)


# Shared properties
class UserBase(SQLModel):
    email: EmailStr = Field(unique=True, index=True, max_length=255)
    is_active: bool = True
    is_superuser: bool = False
    full_name: str | None = Field(default=None, max_length=255)


# Properties to receive via API on creation
class UserCreate(UserBase):
    password: str = Field(min_length=8, max_length=128)


class UserRegister(SQLModel):
    email: EmailStr = Field(max_length=255)
    password: str = Field(min_length=8, max_length=128)
    full_name: str | None = Field(default=None, max_length=255)


# Properties to receive via API on update, all are optional
class UserUpdate(UserBase):
    email: EmailStr | None = Field(default=None, max_length=255)
    password: str | None = Field(default=None, min_length=8, max_length=128)


class UserUpdateMe(SQLModel):
    full_name: str | None = Field(default=None, max_length=255)
    email: EmailStr | None = Field(default=None, max_length=255)


class UpdatePassword(SQLModel):
    current_password: str = Field(min_length=8, max_length=128)
    new_password: str = Field(min_length=8, max_length=128)


# Database model, database table inferred from class name
class User(UserBase, table=True):
    id: uuid.UUID = Field(default_factory=uuid.uuid4, primary_key=True)
    hashed_password: str
    created_at: datetime | None = Field(
        default_factory=get_datetime_utc,
        sa_type=DateTime(timezone=True),
    )
    items: list["Item"] = Relationship(back_populates="owner", cascade_delete=True)


# Properties to return via API, id is always required
class UserPublic(UserBase):
    id: uuid.UUID
    created_at: datetime | None = None


class UsersPublic(SQLModel):
    data: list[UserPublic]
    count: int


# Shared properties
class ItemBase(SQLModel):
    title: str = Field(min_length=1, max_length=255)
    description: str | None = Field(default=None, max_length=255)


# Properties to receive on item creation
class ItemCreate(ItemBase):
    pass


# Properties to receive on item update
class ItemUpdate(ItemBase):
    title: str | None = Field(default=None, min_length=1, max_length=255)


# Database model, database table inferred from class name
class Item(ItemBase, table=True):
    id: uuid.UUID = Field(default_factory=uuid.uuid4, primary_key=True)
    created_at: datetime | None = Field(
        default_factory=get_datetime_utc,
        sa_type=DateTime(timezone=True),
    )
    owner_id: uuid.UUID = Field(
        foreign_key="user.id", nullable=False, ondelete="CASCADE"
    )
    owner: User | None = Relationship(back_populates="items")


# Properties to return via API, id is always required
class ItemPublic(ItemBase):
    id: uuid.UUID
    owner_id: uuid.UUID
    created_at: datetime | None = None


class ItemsPublic(SQLModel):
    data: list[ItemPublic]
    count: int


# Generic message
class Message(SQLModel):
    message: str


# JSON payload containing access token
class Token(SQLModel):
    access_token: str
    token_type: str = "bearer"


# Contents of JWT token
class TokenPayload(SQLModel):
    sub: str | None = None


class NewPassword(SQLModel):
    token: str
    new_password: str = Field(min_length=8, max_length=128)
```

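The way these classes are split up looks seriously impressive; with no real development experience, I would never have divided them this way.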
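One reason for the split becomes visible in a stripped-down version: each class lists exactly the fields that may cross a particular boundary. The sketch below uses standard-library dataclasses rather than SQLModel, and `UserRow` and `to_public` are stand-in names invented for the example.

```python
from dataclasses import asdict, dataclass, fields


@dataclass
class UserBase:
    email: str
    is_active: bool = True


@dataclass
class UserCreate(UserBase):  # what the client sends
    password: str = ""


@dataclass
class UserRow(UserBase):  # what is stored (stands in for User, table=True)
    id: int = 0
    hashed_password: str = ""


@dataclass
class UserPublic(UserBase):  # what the API returns
    id: int = 0


def to_public(row: UserRow) -> UserPublic:
    """Copy only the fields that UserPublic declares."""
    allowed = {f.name for f in fields(UserPublic)}
    return UserPublic(**{k: v for k, v in asdict(row).items() if k in allowed})


row = UserRow(email="admin@example.com", id=1, hashed_password="<hash>")
print(asdict(to_public(row)))
```

The plain password only exists on the input class, the hash only on the stored row, and neither can leak into a response because the public class has no field for them.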

utils.py is mostly utility functions for sending email, so I'll set it aside for now.

```python
import secrets
import warnings
from typing import Annotated, Any, Literal

from pydantic import (
    AnyUrl,
    BeforeValidator,
    EmailStr,
    HttpUrl,
    PostgresDsn,
    computed_field,
    model_validator,
)
from pydantic_settings import BaseSettings, SettingsConfigDict
from typing_extensions import Self


def parse_cors(v: Any) -> list[str] | str:
    if isinstance(v, str) and not v.startswith("["):
        return [i.strip() for i in v.split(",") if i.strip()]
    elif isinstance(v, list | str):
        return v
    raise ValueError(v)


class Settings(BaseSettings):
    model_config = SettingsConfigDict(
        # Use top level .env file (one level above ./backend/)
        env_file="../.env",
        env_ignore_empty=True,
        extra="ignore",
    )
    API_V1_STR: str = "/api/v1"
    SECRET_KEY: str = secrets.token_urlsafe(32)
    # 60 minutes * 24 hours * 8 days = 8 days
    ACCESS_TOKEN_EXPIRE_MINUTES: int = 60 * 24 * 8
    FRONTEND_HOST: str = "http://localhost:5173"
    ENVIRONMENT: Literal["local", "staging", "production"] = "local"

    BACKEND_CORS_ORIGINS: Annotated[
        list[AnyUrl] | str, BeforeValidator(parse_cors)
    ] = []

    @computed_field
    @property
    def all_cors_origins(self) -> list[str]:
        return [str(origin).rstrip("/") for origin in self.BACKEND_CORS_ORIGINS] + [
            self.FRONTEND_HOST
        ]

    PROJECT_NAME: str
    SENTRY_DSN: HttpUrl | None = None
    POSTGRES_SERVER: str
    POSTGRES_PORT: int = 5432
    POSTGRES_USER: str
    POSTGRES_PASSWORD: str = ""
    POSTGRES_DB: str = ""

    @computed_field
    @property
    def SQLALCHEMY_DATABASE_URI(self) -> PostgresDsn:
        return PostgresDsn.build(
            scheme="postgresql+psycopg",
            username=self.POSTGRES_USER,
            password=self.POSTGRES_PASSWORD,
            host=self.POSTGRES_SERVER,
            port=self.POSTGRES_PORT,
            path=self.POSTGRES_DB,
        )

    SMTP_TLS: bool = True
    SMTP_SSL: bool = False
    SMTP_PORT: int = 587
    SMTP_HOST: str | None = None
    SMTP_USER: str | None = None
    SMTP_PASSWORD: str | None = None
    EMAILS_FROM_EMAIL: EmailStr | None = None
    EMAILS_FROM_NAME: str | None = None

    @model_validator(mode="after")
    def _set_default_emails_from(self) -> Self:
        if not self.EMAILS_FROM_NAME:
            self.EMAILS_FROM_NAME = self.PROJECT_NAME
        return self

    EMAIL_RESET_TOKEN_EXPIRE_HOURS: int = 48

    @computed_field
    @property
    def emails_enabled(self) -> bool:
        return bool(self.SMTP_HOST and self.EMAILS_FROM_EMAIL)

    EMAIL_TEST_USER: EmailStr = "test@example.com"
    FIRST_SUPERUSER: EmailStr
    FIRST_SUPERUSER_PASSWORD: str

    def _check_default_secret(self, var_name: str, value: str | None) -> None:
        if value == "changethis":
            message = (
                f'The value of {var_name} is "changethis", '
                "for security, please change it, at least for deployments."
            )
            if self.ENVIRONMENT == "local":
                warnings.warn(message, stacklevel=1)
            else:
                raise ValueError(message)

    @model_validator(mode="after")
    def _enforce_non_default_secrets(self) -> Self:
        self._check_default_secret("SECRET_KEY", self.SECRET_KEY)
        self._check_default_secret("POSTGRES_PASSWORD", self.POSTGRES_PASSWORD)
        self._check_default_secret(
            "FIRST_SUPERUSER_PASSWORD", self.FIRST_SUPERUSER_PASSWORD
        )

        return self


settings = Settings()
```

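```python
from pydantic_settings import BaseSettings, SettingsConfigDict


class Settings(BaseSettings):
    model_config = SettingsConfigDict(
        env_file="../.env",
        env_ignore_empty=True,
        extra="ignore",
    )
```

`BaseSettings`: inherits from BaseModel and can read external configuration files.

`SettingsConfigDict`

`env_file`: the external configuration file to read.

`env_ignore_empty`: variables that are set to an empty string, such as `SMTP_HOST=`, are not read, so the field keeps its default.

`extra`: `"ignore"` skips variables in the configuration file that are not declared as fields on the BaseSettings class.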
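The effect of these two options can be imitated with a few lines of plain Python. This is a toy loader written for illustration, not how pydantic-settings works internally; `load_settings` and the sample values are assumptions.

```python
def load_settings(env_text: str, declared: dict[str, str]) -> dict[str, str]:
    """Toy loader imitating env_ignore_empty=True and extra='ignore'."""
    values = dict(declared)  # start from the declared defaults
    for line in env_text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, raw = line.partition("=")
        value = raw.strip().strip('"')
        if value == "":
            continue  # env_ignore_empty: keep the default
        if key not in declared:
            continue  # extra="ignore": undeclared keys are skipped
        values[key] = value
    return values


env_text = "SMTP_HOST=\nSMTP_PORT=2525\nSTACK_NAME=full-stack-fastapi-project\n"
print(load_settings(env_text, {"SMTP_HOST": "none", "SMTP_PORT": "587"}))
```

Here the empty `SMTP_HOST=` leaves the default in place, `SMTP_PORT` is overridden, and `STACK_NAME` is dropped because no field declares it, which is why the backend can share one .env file with Compose.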

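```python
@computed_field
@property
def SQLALCHEMY_DATABASE_URI(self) -> PostgresDsn:
    return PostgresDsn.build(
        scheme="postgresql+psycopg",
        username=self.POSTGRES_USER,
        password=self.POSTGRES_PASSWORD,
        host=self.POSTGRES_SERVER,
        port=self.POSTGRES_PORT,
        path=self.POSTGRES_DB,
    )
```

`@computed_field`: marks the property as a field whose value is derived from the other fields, so it is computed from them once they have been loaded and is included when the model is serialized.

`@property`: lets this no-argument method look like an ordinary attribute, so you write `settings.SQLALCHEMY_DATABASE_URI` rather than `settings.SQLALCHEMY_DATABASE_URI()`.

`PostgresDsn.build` joins the parts into a connection string of the form `postgresql+psycopg://USER:PASSWORD@HOST:PORT/DB`.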
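The same pattern can be tried without pydantic. In this sketch a plain dataclass exposes the connection string as a property; `DbSettings` and `database_uri` are illustrative names, and the values mirror the .env defaults with `db` as the host, as compose.yml sets it.

```python
from dataclasses import dataclass
from urllib.parse import quote


@dataclass
class DbSettings:
    user: str
    password: str
    host: str
    port: int
    name: str

    @property
    def database_uri(self) -> str:
        # Percent-encode the credentials so characters like "@" stay unambiguous.
        user = quote(self.user, safe="")
        password = quote(self.password, safe="")
        return f"postgresql+psycopg://{user}:{password}@{self.host}:{self.port}/{self.name}"


settings = DbSettings("postgres", "changethis", "db", 5432, "app")
print(settings.database_uri)  # attribute access, no parentheses
```

Because the value is derived on access, changing `host` or `port` on the object is reflected the next time the property is read.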

Of the two, one initializes the admin account and the other sets up a few password utilities; there is not much to analyze.

```python
from collections.abc import Generator
from typing import Annotated

import jwt
from fastapi import Depends, HTTPException, status
from fastapi.security import OAuth2PasswordBearer
from jwt.exceptions import InvalidTokenError
from pydantic import ValidationError
from sqlmodel import Session

from app.core import security
from app.core.config import settings
from app.core.db import engine
from app.models import TokenPayload, User

reusable_oauth2 = OAuth2PasswordBearer(
    tokenUrl=f"{settings.API_V1_STR}/login/access-token"
)


def get_db() -> Generator[Session, None, None]:
    with Session(engine) as session:
        yield session


SessionDep = Annotated[Session, Depends(get_db)]
TokenDep = Annotated[str, Depends(reusable_oauth2)]


def get_current_user(session: SessionDep, token: TokenDep) -> User:
    try:
        payload = jwt.decode(
            token, settings.SECRET_KEY, algorithms=[security.ALGORITHM]
        )
        token_data = TokenPayload(**payload)
    except (InvalidTokenError, ValidationError):
        raise HTTPException(
            status_code=status.HTTP_403_FORBIDDEN,
            detail="Could not validate credentials",
        )
    user = session.get(User, token_data.sub)
    if not user:
        raise HTTPException(status_code=404, detail="User not found")
    if not user.is_active:
        raise HTTPException(status_code=400, detail="Inactive user")
    return user


CurrentUser = Annotated[User, Depends(get_current_user)]


def get_current_active_superuser(current_user: CurrentUser) -> User:
    if not current_user.is_superuser:
        raise HTTPException(
            status_code=403, detail="The user doesn't have enough privileges"
        )
    return current_user
```

This explanation is deep yet easy to follow: yield turns the function into an iterator that runs one step at a time, so it never has to run too many steps at once and cause memory problems.

iterator: Repeated calls to the iterator's `__next__()` method (or passing it to the built-in function next()) return successive items in the stream. When no more data are available a StopIteration exception is raised instead. At this point, the iterator object is exhausted and any further calls to its `__next__()` method just raise StopIteration again.

```python
def get_db() -> Generator[Session, None, None]:
    with Session(engine) as session:
        yield session
```

The official documentation describes it as follows:

A generator can be annotated using the generic type Generator[YieldType, SendType, ReturnType].

```python
def infinite_stream(start: int) -> Generator[int]:
    while True:
        yield start
        start += 1
```

It is also possible to set these types explicitly:

```python
def infinite_stream(start: int) -> Generator[int, None, None]:
    while True:
        yield start
        start += 1
```

So this code in the template is simply saying that the function returns an iterator (more precisely, a generator) object 😅
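What the annotation does not show is the order of events around the `yield`. The sketch below steps through a generator shaped like get_db by hand; `FakeSession` is a made-up stand-in for `sqlmodel.Session` that only records when it is opened and closed.

```python
from collections.abc import Generator

events: list[str] = []


class FakeSession:
    """Stand-in for a database session, only to make the flow visible."""

    def __enter__(self):
        events.append("open")
        return self

    def __exit__(self, *exc):
        events.append("close")


def get_db() -> Generator[FakeSession, None, None]:
    with FakeSession() as session:
        yield session  # execution pauses here while the caller uses the session


gen = get_db()
session = next(gen)  # runs up to the yield
events.append("handle request")
try:
    next(gen)  # resumes after the yield; the with-block exits
except StopIteration:
    events.append("exhausted")

print(events)
```

The session is opened before the caller's work and closed only after it, which is what makes a generator a convenient shape for a dependency that needs cleanup.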

```python
import uuid
from typing import Any

from fastapi import APIRouter, HTTPException
from sqlmodel import func, select

from app.api.deps import CurrentUser, SessionDep
from app.models import Item, ItemCreate, ItemPublic, ItemsPublic, ItemUpdate, Message

router = APIRouter(prefix="/items", tags=["items"])


@router.get("/", response_model=ItemsPublic)
def read_items(
    session: SessionDep, current_user: CurrentUser, skip: int = 0, limit: int = 100
) -> Any:
    """
    Retrieve items.
    """

    if current_user.is_superuser:
        count_statement = select(func.count()).select_from(Item)
        count = session.exec(count_statement).one()
        statement = (
            select(Item).order_by(Item.created_at.desc()).offset(skip).limit(limit)
        )
        items = session.exec(statement).all()
    else:
        count_statement = (
            select(func.count())
            .select_from(Item)
            .where(Item.owner_id == current_user.id)
        )
        count = session.exec(count_statement).one()
        statement = (
            select(Item)
            .where(Item.owner_id == current_user.id)
            .order_by(Item.created_at.desc())
            .offset(skip)
            .limit(limit)
        )
        items = session.exec(statement).all()

    return ItemsPublic(data=items, count=count)


@router.get("/{id}", response_model=ItemPublic)
def read_item(session: SessionDep, current_user: CurrentUser, id: uuid.UUID) -> Any:
    """
    Get item by ID.
    """
    item = session.get(Item, id)
    if not item:
        raise HTTPException(status_code=404, detail="Item not found")
    if not current_user.is_superuser and (item.owner_id != current_user.id):
        raise HTTPException(status_code=403, detail="Not enough permissions")
    return item


@router.post("/", response_model=ItemPublic)
def create_item(
    *, session: SessionDep, current_user: CurrentUser, item_in: ItemCreate
) -> Any:
    """
    Create new item.
    """
    item = Item.model_validate(item_in, update={"owner_id": current_user.id})
    session.add(item)
    session.commit()
    session.refresh(item)
    return item


@router.put("/{id}", response_model=ItemPublic)
def update_item(
    *,
    session: SessionDep,
    current_user: CurrentUser,
    id: uuid.UUID,
    item_in: ItemUpdate,
) -> Any:
    """
    Update an item.
    """
    item = session.get(Item, id)
    if not item:
        raise HTTPException(status_code=404, detail="Item not found")
    if not current_user.is_superuser and (item.owner_id != current_user.id):
        raise HTTPException(status_code=403, detail="Not enough permissions")
    update_dict = item_in.model_dump(exclude_unset=True)
    item.sqlmodel_update(update_dict)
    session.add(item)
    session.commit()
    session.refresh(item)
    return item


@router.delete("/{id}")
def delete_item(
    session: SessionDep, current_user: CurrentUser, id: uuid.UUID
) -> Message:
    """
    Delete an item.
    """
    item = session.get(Item, id)
    if not item:
        raise HTTPException(status_code=404, detail="Item not found")
    if not current_user.is_superuser and (item.owner_id != current_user.id):
        raise HTTPException(status_code=403, detail="Not enough permissions")
    session.delete(item)
    session.commit()
    return Message(message="Item deleted successfully")
```

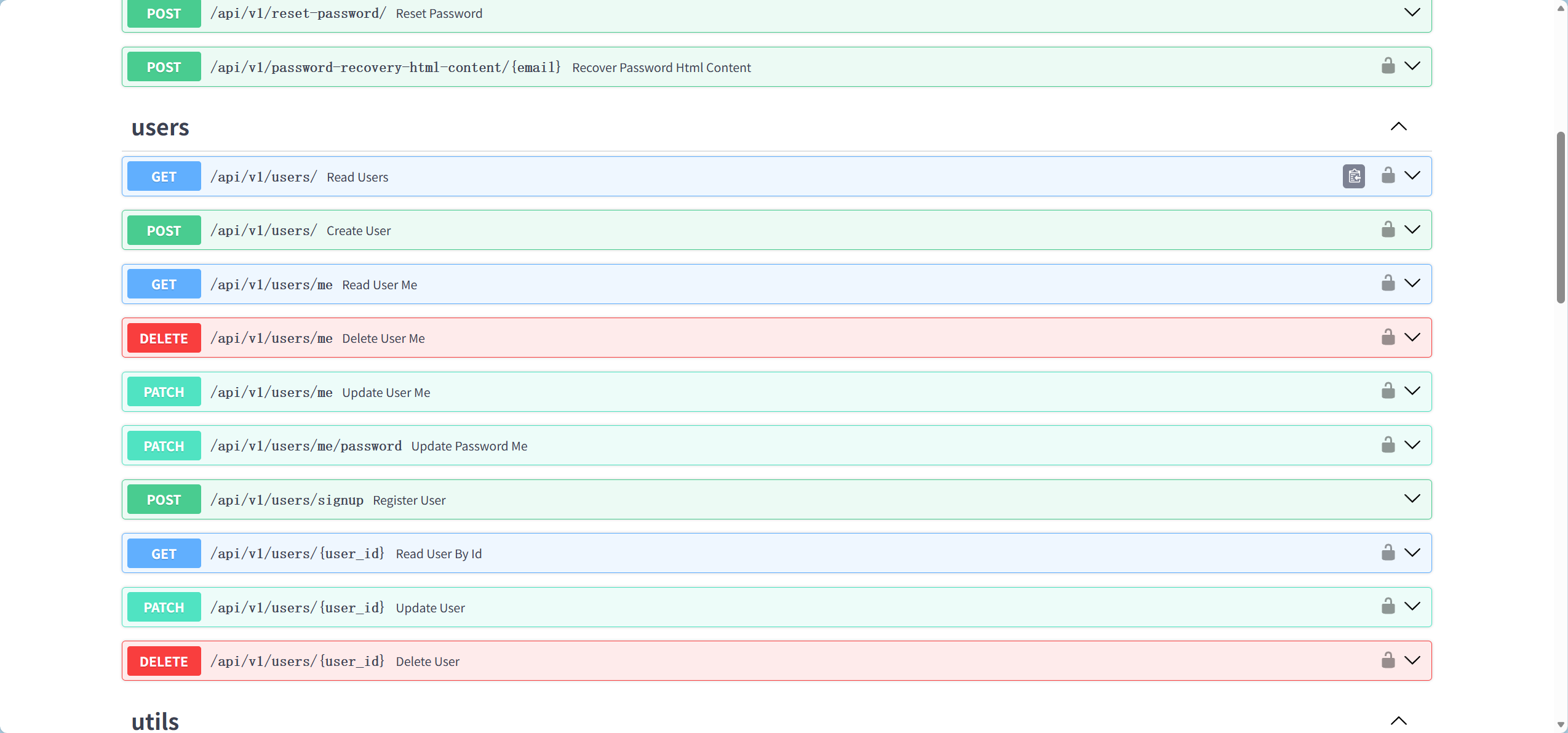

This file handles five requests: "get /items/", "get /items/{id}", "post /items/", "put /items/{id}", and "delete /items/{id}".
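Stripped of the database calls, the item routes repeat two small rules: superusers see everything while other users see only their own items, and a single-item request answers 404, 403, or success in that order. A minimal sketch of that logic on plain objects follows; the dataclasses and helper names are invented for the example and are not the template's models.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class User:
    id: int
    is_superuser: bool = False


@dataclass
class Item:
    id: int
    owner_id: int


def visible_items(user: User, items: list[Item], skip: int = 0, limit: int = 100) -> list[Item]:
    """Superusers see everything; others only their own items. Then paginate."""
    allowed = [i for i in items if user.is_superuser or i.owner_id == user.id]
    return allowed[skip : skip + limit]


def check_access(user: User, item: Optional[Item]) -> int:
    """Return the HTTP status the route would answer with."""
    if item is None:
        return 404
    if not user.is_superuser and item.owner_id != user.id:
        return 403
    return 200


items = [Item(1, owner_id=10), Item(2, owner_id=20), Item(3, owner_id=10)]
alice, admin = User(id=10), User(id=99, is_superuser=True)
print([i.id for i in visible_items(alice, items)])
print(check_access(alice, items[1]), check_access(admin, items[1]), check_access(alice, None))
```

In the real routes the filtering happens in SQL through `where`, `offset`, and `limit`, so only the requested page is ever loaded.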

login.py

“post /login/access-token”

“post /login/test-token”

“post /password-recovery/{email}”

“post /reset-password/”

“post /password-recovery-html-content/{email}”

private.py

users.py

“get /users/”

“post /users/”

“patch /users/me”

“patch /users/me/password”

“get /users/me”

“delete /users/me”

“post /users/signup”

“get /users/{user_id}”

“patch /users/{user_id}”

“delete /users/{user_id}”

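These are all perfectly normal, simple requests. Even an ordinary template project is dizzying enough; I can't imagine how much a real app has to handle.

Having now gone through basically the whole back end, my first impression is that FastAPI is not really a framework; I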